`# cd $HADOOP_PREFIX/etc/hadoop`

Enter the following line at the beginning of the file.
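The line itself is not reproduced in this text. As a minimal sketch, assuming it is a `JAVA_HOME` export (the variable this guide says to update in hadoop-env.sh), the helper below prepends such a line to a file. The function name `prepend_java_home` and any JDK path passed to it are hypothetical.

```shell
# Hypothetical helper: prepend an "export JAVA_HOME=..." line to an env file.
# Arguments: $1 = path to hadoop-env.sh, $2 = JDK directory (both chosen by you).
prepend_java_home() {
  env_file="$1"
  java_home="$2"
  tmp_file="$(mktemp)" || return 1
  # Build the new content: the export line first, then the original file.
  {
    printf 'export JAVA_HOME=%s\n' "$java_home"
    cat "$env_file"
  } > "$tmp_file" || { rm -f "$tmp_file"; return 1; }
  # Write back in place so the file keeps its owner and permissions.
  cat "$tmp_file" > "$env_file"
  rm -f "$tmp_file"
}
```

It could be called as `prepend_java_home "$HADOOP_PREFIX/etc/hadoop/hadoop-env.sh" /path/to/your/jdk`, where the JDK path is whatever your system uses.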

HADOOP INSTALLATION ON WINDOWS 7 UPDATE

First, we need to update JAVA_HOME and the Hadoop path in the hadoop-env.sh file. The Hadoop configuration files are core-site.xml, hdfs-site.xml, mapred-site.xml and yarn-site.xml.
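To illustrate what one of these files can contain, here is a rough sketch that writes a minimal core-site.xml. `fs.defaultFS` is a standard Hadoop property, but the helper name `write_core_site` and the default URI `hdfs://localhost:9000` are assumptions, not values taken from this guide.

```shell
# Hypothetical helper: write a minimal core-site.xml into the given directory.
# fs.defaultFS is a real Hadoop property; the URI value is an assumption.
# Note: this overwrites any existing core-site.xml in that directory.
write_core_site() {
  conf_dir="$1"                          # e.g. "$HADOOP_PREFIX/etc/hadoop"
  fs_uri="${2:-hdfs://localhost:9000}"   # assumed NameNode address and port
  cat > "$conf_dir/core-site.xml" <<EOF
<?xml version="1.0"?>
<configuration>
  <property>
    <name>fs.defaultFS</name>
    <value>$fs_uri</value>
  </property>
</configuration>
EOF
}
```

For example, `write_core_site "$HADOOP_PREFIX/etc/hadoop"` would use the assumed default URI; pass a second argument to use a different host or port.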

In Hadoop, each service has its own port number and its own directory to store its data, so the Hadoop configuration files need to be adjusted to fit your machine.

Add the Hadoop environment variables to the ~/.bashrc file. After adding them, source the file and verify the Hadoop installation:

`# source ~/.bashrc`
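The environment variables added to ~/.bashrc might look like the sketch below. `HADOOP_PREFIX` is the variable used elsewhere in this guide; the install location `/opt/hadoop` is only an assumed example.

```shell
# Assumed install location; point this at the directory where Hadoop was extracted.
export HADOOP_PREFIX="/opt/hadoop"
# Put the Hadoop client commands (bin) and daemon scripts (sbin) on the PATH.
export PATH="$PATH:$HADOOP_PREFIX/bin:$HADOOP_PREFIX/sbin"
```

Once the file has been sourced, running `hadoop version` should report the installed release if the paths are correct.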

HADOOP INSTALLATION ON WINDOWS 7 DOWNLOAD

Go to the Apache Hadoop website and download the stable release of Hadoop using the wget command.

The master node uses an SSH connection to reach its slave nodes and perform operations such as start and stop. We need to set up password-less SSH so that the master can communicate with the slaves without a password; otherwise, a password must be entered each time a connection is established. In this single-node setup, the master services (NameNode, Secondary NameNode and ResourceManager) and the slave services (DataNode and NodeManager) run as separate JVMs. Even though it is a single node, password-less SSH is still needed so that the master can communicate with the slave without authentication.

Set up a password-less SSH login using the following command on the server:

`# ssh-keygen`

After you have configured password-less SSH login, try to log in again; you will be connected without a password.
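The password-less SSH steps can be sketched as a small helper. This is one possible arrangement, not necessarily the exact commands the guide intended: the function name `setup_passwordless_ssh` is hypothetical, and it assumes the standard OpenSSH tools `ssh-keygen`, `ssh-copy-id` and `ssh` are installed.

```shell
# Hypothetical helper: create a key pair (if missing) and authorize it on a host.
# $1 = target in user@host form, $2 = private key path (defaults to ~/.ssh/id_rsa).
setup_passwordless_ssh() {
  target="$1"
  key_file="${2:-$HOME/.ssh/id_rsa}"
  # Make sure the key directory exists before generating anything.
  mkdir -p -m 700 "$(dirname "$key_file")" || return 1
  # Generate an RSA key with an empty passphrase only when none exists yet.
  if [ ! -f "$key_file" ]; then
    ssh-keygen -t rsa -N "" -f "$key_file" || return 1
  fi
  # Append the public key to the target's authorized_keys (asks for the password once).
  ssh-copy-id -i "$key_file.pub" "$target" || return 1
  # BatchMode forbids password prompts, so this succeeds only if key login works.
  ssh -o BatchMode=yes "$target" true
}
```

On a single-node setup it could be invoked as `setup_passwordless_ssh "$USER@localhost"`; a zero exit status indicates that key-based login works.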

We need to have SSH configured on our machine, because Hadoop manages its nodes over SSH. Next, verify the installed version of Java on the system.

HADOOP INSTALLATION ON WINDOWS 7 INSTALL

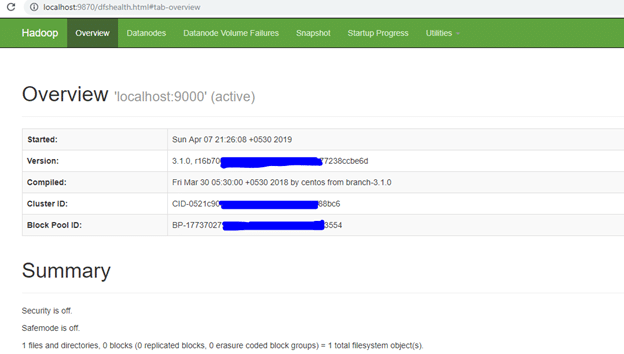

Hadoop is an open-source framework that is widely used to deal with big data. Most big data and data analytics projects are built on top of the Hadoop ecosystem. It consists of two layers: one for storing data and another for processing data. Storage is handled by its own filesystem, HDFS (Hadoop Distributed Filesystem), and processing is handled by YARN (Yet Another Resource Negotiator). MapReduce is the default processing engine of the Hadoop ecosystem.

This article describes the pseudo-node installation of Hadoop, in which all the daemons (JVMs) run as a single-node cluster on CentOS 7.